The Cerebras vs Nvidia debate is reshaping how investors compare AI chip makers this year. Cerebras went public on 14 May 2026 at a $95 billion market cap, with a business built around its Wafer Scale Engine.

Nvidia still holds roughly 90% of the AI accelerator market and captures over 40% of data center spend, anchored by the CUDA software moat. This article covers the architecture, the benchmarks, and the practical read for anyone holding NVDA stock right now.

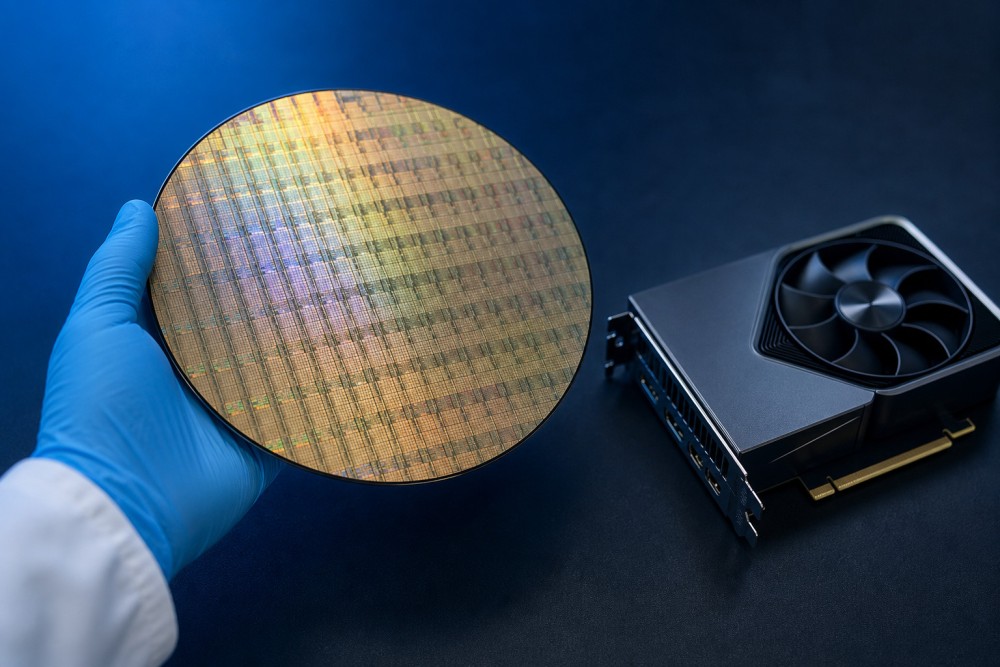

How Wafer Scale Engine Architecture Differs from GPU Clusters

Nvidia builds AI systems by stitching together hundreds or thousands of discrete GPUs in a rack. Off-chip traffic over NVLink and PCIe is the dominant bottleneck for very large language model workloads at hyperscale.

Cerebras takes a different route. The WSE-3 is one chip carved from a 300mm silicon wafer with no inter-die interconnect.

Memory Bandwidth and On-Chip Communication

The WSE-3 packs 4 trillion transistors and 900,000 cores onto a 5 nm process, with 44 GB of on-chip SRAM. According to Tom's Hardware, one wafer delivers 125 FP16 petaflops of peak compute, equivalent on paper to about 62 Nvidia H100 GPUs.

Memory bandwidth reaches 21 PB/s, roughly 2,625 times the 8 TB/s on Nvidia's flagship B200 GPU. Cores read and write neighboring memory in a single clock cycle, with zero off-chip hop and zero NVLink congestion penalty.
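
The ratios quoted above follow directly from the headline figures. Here is a minimal Python sketch of that arithmetic, using only numbers from this section. The per-GPU H100 figure is not stated here, so it is backed out from the "about 62 GPUs" claim and should be read as an implied value, not a spec.

```python
# Headline figures quoted in this section (vendor peak numbers, not measured throughput).
WSE3_BANDWIDTH_TBPS = 21_000   # 21 PB/s expressed in TB/s
B200_BANDWIDTH_TBPS = 8        # 8 TB/s
WSE3_FP16_PFLOPS = 125
H100_EQUIVALENTS = 62          # "about 62 Nvidia H100 GPUs"


def bandwidth_ratio(wafer_tbps: float, gpu_tbps: float) -> float:
    """How many times larger the wafer's memory bandwidth is than one GPU's."""
    return wafer_tbps / gpu_tbps


def implied_pflops_per_gpu(total_pflops: float, gpu_count: int) -> float:
    """Per-GPU peak compute implied by the equivalence claim."""
    return total_pflops / gpu_count


ratio = bandwidth_ratio(WSE3_BANDWIDTH_TBPS, B200_BANDWIDTH_TBPS)
per_h100 = implied_pflops_per_gpu(WSE3_FP16_PFLOPS, H100_EQUIVALENTS)

print(f"Bandwidth ratio: {ratio:,.0f}x")
print(f"Implied H100 peak: {per_h100:.2f} FP16 PFLOPS")
```

Peak ratios like these describe what the silicon can do on paper. Delivered performance depends on the model and the software stack.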

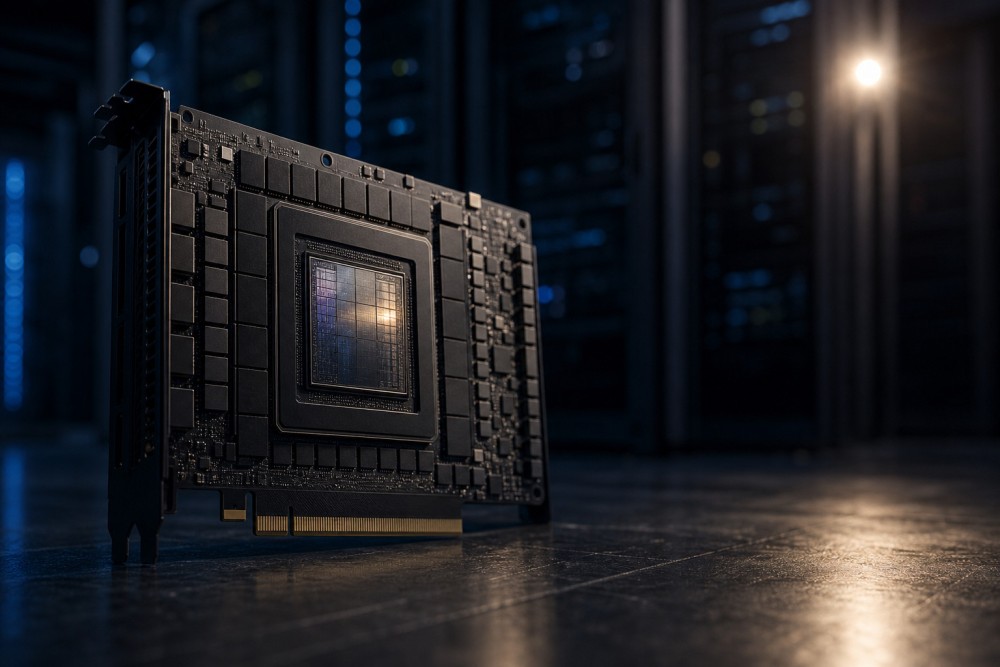

Execution Model: Layer by Layer vs Parallel Sharding

The WSE processes a neural network one layer at a time across the full wafer surface, then moves on to the next layer. GPU clusters split a model into many shards and run pipeline parallelism across many dies, with synchronization overhead at every boundary.
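
The difference in synchronization can be illustrated with a toy count of stage-boundary hand-offs. This is a simplified sketch, not a model of either vendor's runtime: it ignores the backward pass, tensor parallelism, and weight movement. The layer count, stage count, and microbatch count are hypothetical values chosen for illustration.

```python
def split_layers(num_layers: int, num_stages: int) -> list[range]:
    """Assign contiguous layer ranges to pipeline stages as evenly as possible."""
    base, extra = divmod(num_layers, num_stages)
    stages, start = [], 0
    for stage in range(num_stages):
        size = base + (1 if stage < extra else 0)
        stages.append(range(start, start + size))
        start += size
    return stages


def boundary_transfers(num_stages: int, num_microbatches: int) -> int:
    """Activation hand-offs between adjacent stages in one forward pass.

    Every microbatch crosses each of the (num_stages - 1) boundaries once.
    """
    return (num_stages - 1) * num_microbatches


# Hypothetical workload: 80 layers, 4 microbatches in flight.
NUM_LAYERS = 80
MICROBATCHES = 4

gpu_stages = split_layers(NUM_LAYERS, num_stages=8)    # model sharded over 8 devices
wafer_stages = split_layers(NUM_LAYERS, num_stages=1)  # whole model on one wafer

gpu_transfers = boundary_transfers(len(gpu_stages), MICROBATCHES)
wafer_transfers = boundary_transfers(len(wafer_stages), MICROBATCHES)

print(f"Sharded pipeline: {gpu_transfers} cross-device hand-offs")
print(f"Single wafer:     {wafer_transfers} cross-device hand-offs")
```

Each hand-off in the sharded case is a point where one device waits on another. The layer-by-layer approach aims to remove that cost.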

Cerebras wins on synchronization simplicity and raw memory bandwidth. Nvidia wins on workload flexibility and CUDA ecosystem maturity. The side-by-side silicon numbers:

| Spec | Cerebras WSE-3 | Nvidia B200 |
|---|---|---|
| Transistors | 4 trillion | 208 billion |
| AI cores | 900,000 | fewer per die |
| On-chip memory | 44 GB SRAM | 192 GB HBM3e |
| Memory bandwidth | 21 PB/s | 8 TB/s |
| Peak compute | 125 FP16 PFLOPS | ~4.5 FP16 PFLOPS |
| Power per system | 23 kW (CS-3) | ~14 kW (DGX B200) |
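
The table mixes per-chip figures (peak compute) with per-system figures (power), so a like-for-like efficiency comparison needs one extra step. The sketch below puts both on a per-system basis. It assumes one DGX B200 houses 8 B200 GPUs, a figure this article does not state. Treat the result as a rough peak-compute-per-kilowatt estimate, not a measured efficiency.

```python
# Figures from the table above (vendor peak numbers).
CS3_PEAK_PFLOPS = 125.0
CS3_POWER_KW = 23.0
B200_PEAK_PFLOPS = 4.5
DGX_B200_POWER_KW = 14.0

# Assumption, not stated in this article: one DGX B200 houses 8 B200 GPUs.
GPUS_PER_DGX_B200 = 8


def pflops_per_kw(peak_pflops: float, power_kw: float) -> float:
    """Peak FP16 PFLOPS available per kilowatt of system power."""
    return peak_pflops / power_kw


cs3_efficiency = pflops_per_kw(CS3_PEAK_PFLOPS, CS3_POWER_KW)
dgx_efficiency = pflops_per_kw(
    B200_PEAK_PFLOPS * GPUS_PER_DGX_B200, DGX_B200_POWER_KW
)

print(f"CS-3:     {cs3_efficiency:.2f} peak PFLOPS per kW")
print(f"DGX B200: {dgx_efficiency:.2f} peak PFLOPS per kW")
```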

Benchmarks, Customers, and the Real Competitive Threat

Cerebras has posted aggressive numbers against Nvidia's flagship hardware this year on multiple frontier models. On Meta's Llama 3.1 70B the CS-3 hit 2,100 tokens per second per user, roughly 8x the H200 for single-user latency.
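
To see what a per-user token rate means in practice, the sketch below converts it into waiting time for a single response. The H200 rate is implied by the "roughly 8x" claim and is not a separately reported number. The 1,000-token response length is a hypothetical example.

```python
CS3_TOKENS_PER_SEC = 2_100   # quoted per-user rate on Llama 3.1 70B
SPEEDUP_VS_H200 = 8          # "roughly 8x the H200"
RESPONSE_TOKENS = 1_000      # hypothetical response length


def generation_seconds(num_tokens: int, tokens_per_sec: float) -> float:
    """Time to stream num_tokens to one user at a steady token rate."""
    return num_tokens / tokens_per_sec


# Implied by the 8x claim, not a separately reported figure.
h200_tokens_per_sec = CS3_TOKENS_PER_SEC / SPEEDUP_VS_H200

cs3_seconds = generation_seconds(RESPONSE_TOKENS, CS3_TOKENS_PER_SEC)
h200_seconds = generation_seconds(RESPONSE_TOKENS, h200_tokens_per_sec)

print(f"CS-3: {cs3_seconds:.2f} s for {RESPONSE_TOKENS} tokens")
print(f"H200: {h200_seconds:.2f} s for {RESPONSE_TOKENS} tokens")
```

This covers single-user latency only. It says nothing about aggregate throughput across many concurrent users, where batching changes the picture.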

On a reasoning workload with 1,024 input and 4,096 output tokens, Cerebras claims the CS-3 runs 21x faster than the DGX B200 system. Total cost of ownership lands 32% below the B200 when capex and energy opex are combined, with each CS-3 system drawing 23 kW.
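
A total-cost-of-ownership figure of this kind combines purchase price with energy spend over the system's life. The sketch below shows one simple way to frame it. The electricity price is a hypothetical placeholder, and the article does not disclose the capex inputs behind the 32% figure, so the baseline is normalized to 1.0 rather than given a dollar value.

```python
HOURS_PER_YEAR = 8_760
CS3_POWER_KW = 23             # per-system draw quoted above
PRICE_PER_KWH = 0.10          # hypothetical electricity price in USD


def total_cost(capex: float, power_kw: float, years: float, price_per_kwh: float) -> float:
    """Capex plus energy opex, assuming the system runs flat out all year."""
    return capex + power_kw * HOURS_PER_YEAR * years * price_per_kwh


def cost_after_saving(baseline_cost: float, saving_fraction: float) -> float:
    """Cost that sits saving_fraction below a baseline."""
    return baseline_cost * (1 - saving_fraction)


annual_kwh = CS3_POWER_KW * HOURS_PER_YEAR
annual_energy_cost = total_cost(0.0, CS3_POWER_KW, 1, PRICE_PER_KWH)

# "32% below the B200", with the B200 baseline normalized to 1.0.
relative_cs3_cost = cost_after_saving(1.0, 0.32)

print(f"CS-3 energy use: {annual_kwh:,} kWh per year")
print(f"Energy cost at ${PRICE_PER_KWH:.2f}/kWh: ${annual_energy_cost:,.0f} per year")
print(f"CS-3 TCO relative to B200 baseline: {relative_cs3_cost:.2f}")
```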

Customer Roster and Revenue Mix

The customer book has shifted dramatically through the IPO window. Fortune reports G42 dropped from 85% of 2024 revenue to 24% in 2025 as other buyers took a larger share.

- OpenAI, on a multi-year agreement valued above $20 billion signed in January 2026, anchoring future inference revenue

- Meta Platforms, using Cerebras for select inference workloads on internal Llama deployments at production scale

- Amazon Web Services, listed among newer enterprise accounts in the IPO prospectus alongside other hyperscale interest

- Mohamed bin Zayed University of AI, now the largest single buyer at 62% of 2025 sales

- Mistral, Notion, Perplexity, and AlphaSense across the application-layer cohort building on faster inference

The Honest Threat Assessment for Nvidia

Cerebras delivered $510 million of 2025 revenue and grew 76% year over year, a strong absolute number for a chip start-up entering public markets. Per The Motley Fool, Nvidia delivered $215.9 billion in fiscal 2026, roughly 423 times Cerebras revenue and a different league entirely.
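
The scale gap can be checked with quick arithmetic on the figures above. The 2024 revenue number below is not quoted in this article. It is derived from the 2025 figure and the 76% growth rate, so treat it as an implied estimate.

```python
CEREBRAS_2025_REVENUE_M = 510.0      # USD millions
CEREBRAS_YOY_GROWTH = 0.76           # 76% year over year
NVIDIA_FY2026_REVENUE_M = 215_900.0  # USD millions ($215.9 billion)


def prior_year_revenue(current: float, growth: float) -> float:
    """Revenue one year earlier implied by a year-over-year growth rate."""
    return current / (1 + growth)


def revenue_multiple(larger: float, smaller: float) -> float:
    """How many times one revenue figure exceeds another."""
    return larger / smaller


implied_2024_revenue_m = prior_year_revenue(CEREBRAS_2025_REVENUE_M, CEREBRAS_YOY_GROWTH)
nvidia_multiple = revenue_multiple(NVIDIA_FY2026_REVENUE_M, CEREBRAS_2025_REVENUE_M)

print(f"Implied Cerebras 2024 revenue: ${implied_2024_revenue_m:.0f}M")
print(f"Nvidia revenue multiple: {nvidia_multiple:.0f}x")
```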

The CUDA software ecosystem keeps developers anchored to NVDA, and Nvidia ships new architectures on a roughly annual cadence, with Rubin following Blackwell. The realistic outcome is steady share gains for Cerebras in a fast-growing market, with AMD and Broadcom carving adjacent niches.

Conclusion

Cerebras owns the inference speed crown today on memory-bound workloads thanks to the Wafer Scale Engine's memory bandwidth. It remains a focused contender rather than a full replacement for Nvidia across broad training workloads and the CUDA stack.

Cerebras (CBRS) is already listed on Gotrade. Trade CBRS, NVDA, AMD, and AVGO with fractional shares from $1 and 24/5 US market access.

FAQ

What is the Cerebras Wafer Scale Engine?

The WSE-3 is a single AI chip built from one full silicon wafer with 4 trillion transistors and 900,000 cores, designed to remove the off-chip communication bottlenecks of GPU clusters at scale.

Is Cerebras faster than Nvidia for AI training?

Cerebras has posted inference benchmarks up to 21x faster than Nvidia's flagship B200 on certain large language models, though training comparisons depend heavily on model size, batch shape, and software stack maturity.

Can I buy Cerebras stock on Gotrade?

Cerebras (CBRS) is officially listed on Gotrade's US stock catalog as of May 2026.

Does Cerebras threaten Nvidia's market dominance?

Cerebras is a credible competitor in AI inference but generated only $510 million in 2025 revenue against Nvidia's $215.9 billion, so the realistic outcome is share gains in a growing market, not a takeover.

Which AI chip stocks are tradable on Gotrade today?

Cerebras (CBRS), Nvidia, AMD, and Broadcom are all available on the Gotrade app for Southeast Asian investors who want diversified exposure to AI accelerators and custom silicon.